Research Article

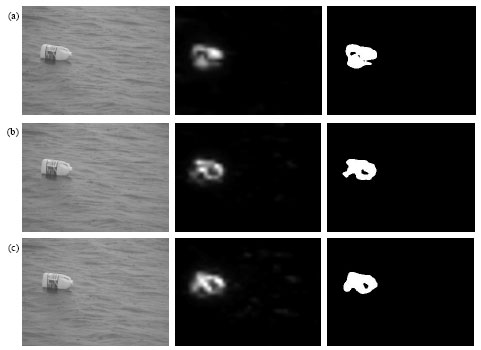

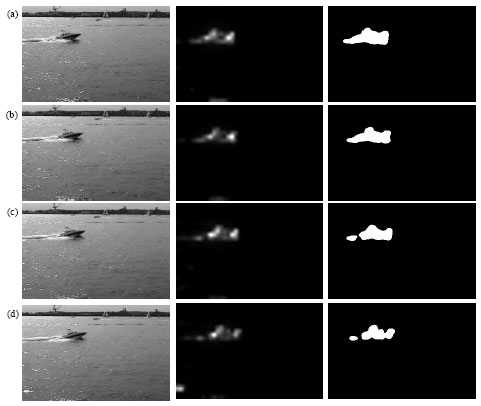

Moving Target Detection in Complex Background

College of Information and Communication Engineering, Harbin Engineering University, Harbin 150001, China

Xian-Rui Song

College of Information and Communication Engineering, Harbin Engineering University, Harbin 150001, China