Research Article

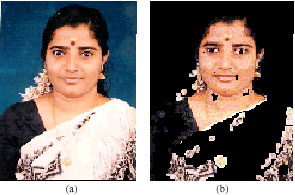

Domain Specific View for Face Perception

PG and Research Department of Computer Science, D.G. Vaishnav College, Arumbakkam, Chennai-600 106, Tamil Nadu, India

T. Santhanam

PG and Research Department of Computer Science, D.G. Vaishnav College, Arumbakkam, Chennai-600 106, Tamil Nadu, India