Research Article

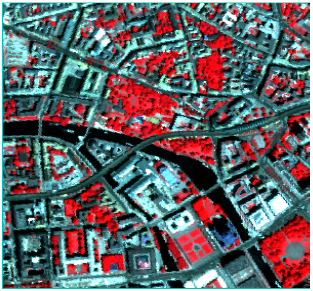

Classification of High-resolution Remotely Sensed Images Based on Random Forests

Faculty of Information Engineering, China University of Geosciences (Wuhan), 430074 Wuhan City, China

Xinwen Cheng

Faculty of Information Engineering, China University of Geosciences (Wuhan), 430074 Wuhan City, China