Research Article

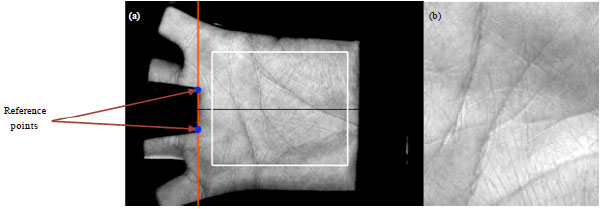

Personal Identification System Based on Palmprint

Department of Electronics and Communication Engineering, Vishnulakshmi College of Engineering and Technology, Coimbatore, Tamil Nadu, 641045, India

R. Shanmugalakshmi

Department of Computer Science Engineering, Government College of Technology, Coimbatore, Tamil Nadu, 641045, India