Research Article

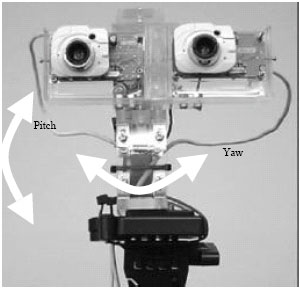

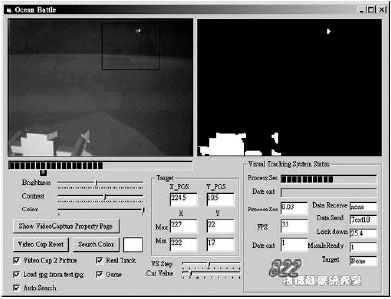

A Real-Time Vision Tracking System Using Human Emulation Device

Department of Mechanical Engineering, Tatung University, 40 Chung-Shan North Rd. 3rd. Sec., Taipei, Taiwan 104, Republic of China

Kuei-Shu Hsu

Department of Applied Geoinformatics, Chia Nan University of Pharmacy and Science, Taiwan 717, Republic of China

Ming-Guo Her

Department of Mechanical Engineering, Tatung University, 40 Chung-Shan North Rd. 3rd. Sec., Taipei, Taiwan 104, Republic of China