Research Article

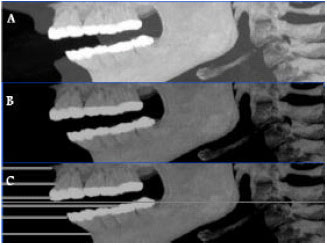

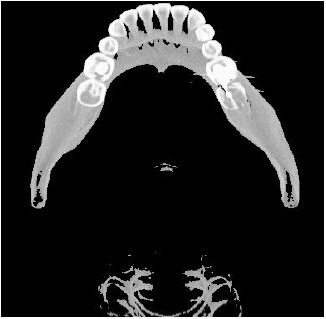

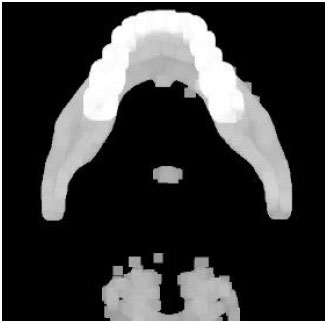

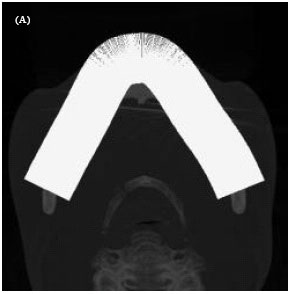

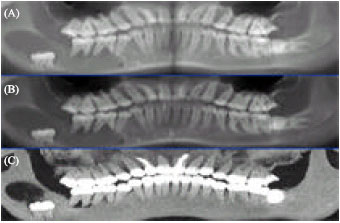

Fully Automatic Extraction of Panoramic Dental Images from CT-Scan Volumetric Data of the Head

Control and Intelligent Processing Center of Excellence, Faculty of Engineering, School of Electrical and Computer Engineering, University of Tehran, Tehran, Iran

R.A. Zoroofi

Control and Intelligent Processing Center of Excellence, Faculty of Engineering, School of Electrical and Computer Engineering, University of Tehran, Tehran, Iran

G. Shirani

Department of Oral and Maxillofacial Surgery, Faculty of Dentistry Medical Science, University of Tehran, Tehran, Iran

Mayank Sharma

Nice approach, sir.

I am following your paper for similar work.

But I am stuck at one point.

Is the segmentation done on the whole volume as a 3D segmentation, or on individual images?

Which algorithm did you use for segmentation?