Research Article

Interest Based Recommendations with Argumentation

Department of Computer Science, University of Delhi, Delhi, India

Pooja Vashisth

Department of Computer Science, University of Delhi, Delhi, India

INTRODUCTION AND MOTIVATIONS

User support systems have evolved in the last few years as specialized tools to assist users in a plethora of computer-mediated tasks by providing guidelines or hints. Recommender systems are a special class of user support tools that act in cooperation with users, complementing their abilities and augmenting their performance by offering proactive or on-demand, context-sensitive support (Konstan, 2004). Recommender systems or agents are information filtering autonomous agents that suggest information items which are likely to be of interest to the user. The inference and explanation process which led to such suggestions is mostly unknown. Although the effectiveness of existing recommenders is remarkable, they still have certain limitations as they are incapable of dealing formally with the ever changing user’s preferences in complex environments. Decisions about user preferences are mostly based on ranking previous user choices or gathering information from other users with similar interests. There is no method of capturing the reasons beyond a particular preference or some change in it. The systems are not well equipped with explicit reasoning or understanding capabilities. The absence of an underlying formal model makes it hard to provide users with a clear explanation of the factors and procedures that led the system to come up with particular recommendations. In fact, a recommender should be able to locate the reasons behind changing user preferences or why a particular suggestion was not of interest for a particular user. This would help in understanding user’s requirement. Logic-based approaches could help to overcome these issues, by enhancing recommendation technology in order to provide a means to formally express user requirements and to draw inferences. 
In this study, we argue that argumentation frameworks based on the Belief-Desire-Intention (BDI) agent architecture can further empower recommender systems by providing inference mechanisms for reasoning beyond user preferences and the resulting recommendations. The argumentation paradigm has proven successful in a growing number of real-world applications, such as multi-agent systems, legal reasoning and the semantic web (Chesnevar et al., 2009), among many others. This study proposes an argument-based framework for generating Interest-Based Recommendations (IBR) in a multi-agent environment. We refer to the recommendations as interest-based because the proposed framework generates suggestions that are desirable to the users and satisfy their motivational attitudes (desires, goals, preferences, etc.). The user and the IBR agent are able to give justifications in the form of arguments, which lets the agents learn the reasons behind an unexpected output. Argumentation thus equips recommender systems with inference abilities to present the underlying motives and well-explained suggestions. We make two contributions towards automated recommender systems. Firstly, we show that an interest-based recommendation requires an explicit representation of the relationships between a user’s preferences, goals, sub-goals, beliefs and recommendations, and we present a framework that captures these relationships. Secondly, we analyze the attacks on different arguments and show how these arguments may influence the agent's adopted goals and consequently its preferences over possible recommendations. We also equip the recommender agent with argumentation so that it can justify its suggestions and understand the needs of a user in order to generate interesting recommendations.

AGENTS AND ARGUMENTATION IN RECOMMENDER SYSTEMS

In the last few years the Artificial Intelligence (AI) community has carried out a great deal of work on recommender systems (Niknafs and Band, 2010; Bedi et al., 2009b). Such systems can help people find what they want or suggest items of interest to them, especially on the Internet (Banati et al., 2006). Agent technology becomes invaluable once we expect these systems to take personal preferences into account and to infer and intelligently aggregate opinions (Bedi and Chawla, 2010) and relationships from heterogeneous sources and data (Niinivaara, 2004). Recommender applications can be valuable tools supporting, for example, information search and decision making. These systems have to handle diverse and changing user preferences and restrictions. Because of this variety, recommendation can be treated at different levels of complexity, and knowledge-based approaches are very suitable (Felfernig and Gula, 2006; Felfernig et al., 2008). Although suitable, knowledge-based approaches do not reason beyond the user’s particular requirements and preferences. Besides this, they keep the human user engaged for a long time during the interactive session required for repair activity. Several architectures have been proposed to give agents formal support. Among them, a well-known intentional formal approach is the BDI architecture proposed by Rao and Georgeff (1995). This model is based on the explicit representation of the agent’s Beliefs (B), its Desires (D) and its Intentions (I). This architecture has evolved over time and has been applied in the development of several significant multi-agent applications (Casali et al., 2007; Schlesinger et al., 2010). IBR is not an isolated effort towards flexible recommendation systems. Recent research in recommender technologies includes an approach for integrating argumentation into recommendation systems by Chesnevar et al. (2009). This approach enhanced recommendation technologies through the use of qualitative, argument-based analysis. Argumentation is also useful in resolving conflicts due to preferences, beliefs, desires and intentions. Amgoud (2009) proposes an abstract argumentation-based framework for decision making. That framework motivated us to use argumentation to identify the underlying mental attitudes of an agent that are responsible for certain decisions. The present study concentrates on situations where the agents' limited knowledge of each other and of the domain makes it essential for them to be, in a sense, cooperative and to understand each other’s interests to achieve the best outcome. Along similar lines is the work by Rahwan (2004) in the field of interest-based negotiation, where agent preferences were not predetermined or fixed. We drew inspiration from it to take present-day recommendation one step further, that is, beyond the user’s explicit preferences to the reasons, motives and interests lying behind them.

RECOMMENDER SYSTEMS

Recommender systems are information filtering systems that recommend information items which are likely to be of interest to the user. They are aimed at helping users to deal with the problem of information overload by facilitating access to relevant items. User support systems operate in association with the user to effectively accomplish a range of tasks. Therefore, these systems act in cooperation with users, complementing their abilities and augmenting their performance by offering proactive or on demand context-sensitive support. They usually operate by creating a model of the user’s preferences (user modelling) or the user’s task (task modelling) with the purpose of facilitating access to items that the user may find useful (Al-Murtadha et al., 2010). Two main techniques used in literature to compute recommendations are content based and collaborative filtering approaches. A combination of collaborative-filtering and content-based recommendation gives rise to hybrid recommender systems (Burke, 2002). Although large amounts of qualitative data is available on the web in the form of rankings, opinions and other facts, this data is hardly used by existing recommenders to perform inference. Even quantitative data available on the web could give rise to highly reliable and traceable suggestions if used by a system with the ability to perform qualitative inference on this data. Current recommendation technologies can be improved if they are provided with the ability to qualitatively exploit these data and reason beyond user preferences. This gives rise to a number of research opportunities in recommender systems: like exposing underlying assumptions, dealing with the defeasible nature of user’s preferences (Bedi et al., 2009a), approaching trust and trustworthiness (Bedi and Kaur, 2006; Bedi and Vashisth, 2011) and proving rationally compelling arguments.

A solution to some of these research problems can be provided by integrating existing user support technologies with appropriate inferential mechanisms for qualitative reasoning. As we will see in the next sections, the use of argumentation allows enhancing multi-agent recommender systems with inference abilities to present the deeper motives and reasoned suggestions.

Argument-based recommendation technologies: We contend that argument-based reasoning can be integrated into interest-based recommender systems in order to provide a qualitative perspective in decision making at both ends. This can be achieved by integrating inference abilities to offer reasoned suggestions modeled in terms of arguments in favor of and against a particular decision. This approach complements existing qualitative techniques by enriching the user’s mental model of such computer systems in a natural way. Such systems generate suggestions as statements which are backed by supporting arguments (Chesnevar et al., 2009). Clearly, conflicting suggestions may arise and it will be necessary to determine which suggestions can be considered valid according to the user’s preferences. If conflicts arise from the motivational attitudes of the agents, argumentation can again be used to resolve them. The recommender agent can use this additional information to generate new interesting recommendations for the user. The role of argumentation is to provide a sound formal framework as a basis for such analysis. The present proposal is based on modeling the user’s preference criteria in terms of a hybrid recommender agent program built on the BDI architecture. In such a setting, the user’s preferences and background knowledge can be codified as beliefs, desires, goals, facts, strict rules and goal-based rules in a BDI agent program.

INTEREST BASED RECOMMENDATIONS (IBR)

In multi-agent systems, agents often need to interact in order to fulfill their objectives or improve their performance. One type of interaction that is gaining increasing prominence in the agent community is recommendation: a form of interaction in which one or more agents assist users by communicating information about relevant items where there is an information overload. These agents desire to cooperate even when their interests conflict.

We hence informally define an interesting recommendation for a user agent as an ordered set of desirable options that satisfy its motivational attitudes. This study proposes an argument-based framework for generating Interest-Based Recommendations (IBR), which allows the satisfaction of deeper interests. Interest-based recommendation allows agents to exchange additional information and correct misconceptions during interaction. Agents may argue about each other's beliefs and other mental attitudes in order to (1) justify their positions and (2) influence each other's positions. This may be useful, for example, in consumer commerce, because consumers may make uninformed decisions based on false or incomplete information. Consumer preferences can be shaped and changed as a result of the interaction with potential sellers and perhaps with other people of potential influence such as family members or other consumers. Game-theoretic and traditional economic mechanisms have no way to represent such interaction, as they work on pre-determined and fixed preferences. Hence, we adopted an argument-based framework to generate interesting recommendations (interest-based recommendations) for the user agents. The proposed framework identifies essential features required to enable IBR using argumentation among autonomous agents. It identifies and deduces arguments for the beliefs, desires and intentions behind the generated recommendations and user preferences. It also identifies different conflicts among agents and ways of resolving them.

Argumentation in IBR: A goal-directed recommendation required by a user agent may be informally defined in terms of the suggestion(s) that user agent (agent U) wants to acquire from the recommender agent (agent R) to satisfy its goal. For example, a customer’s automated agent (called as user agent ‘U’ in this study) might approach a travel recommender agent because the customer wants to go on a vacation in a specific time period say from 12th December 2011 till 17th December 2011. The most preferred location for him is Goa, followed by Kanyakumari and then Manali. Therefore, to satisfy the ultimate goal of going on a vacation, his present goal is to buy air tickets to Goa, subject to specific budget and time constraints. Certainly, user agent’s top goals, related preferences and constraints are mentioned in the customer’s profile provided to the recommender agent R.

Example 1a: Goal-directed recommendation leading to no suitable option.

| • | U: I would like to buy air tickets to Goa please |

| • | R: The best offer available is for $300 |

| • | U: I reject! How about $200? |

| • | R: I reject! Sorry there is none |

This dialogue ended with no result because the option generated by the recommender was not economical and so did not satisfy the user. Let us now consider a variant of the above example. Suppose, this time, that the user might concede and pay $275 but would be less satisfied. Suppose also that the recommender could also get an air ticket offer for $275.

Example 2a: Goal-directed recommendation leading to sub-optimal option.

| • | U: I would like to buy air tickets to Goa please |

| • | R: The best offer available is for $300 |

| • | U: I reject! How about $200? |

| • | R: I reject! Sorry there is none. How about an air ticket offer for $275? |

| • | U: I guess that's the best I can do! I accept! |

In this case, the user gets a sub-optimal outcome from the recommender. This results in a different, less satisfactory position for the user. The deficiencies of conventional way of recommendation lie mainly in the underlying assumptions about agents' preferences over possible agreements. In particular, it assumes each user’s (agent) preferences are complete and fixed so that these will not change. However, there are many situations in which agents' preferences are incomplete and improper. An agent's preferences may be incomplete for a number of reasons. A consumer, for example, may not have preferences over new products/offers, or products he is not familiar with. During the extended interaction with the recommender agent, the user may acquire the information necessary to establish changed or new preferences.

A solution: Interest-Based Recommendation using argumentation: Consider the following alternative to the dialogues presented in examples 1a and 2a. Let us assume that the recommender suggests a change in preference for dates by recommending a discounted air ticket to Goa for the user for the same or even a cheaper price say $175, to meet the goal of going on a vacation to Goa, if the user can plan to leave on 19th December 2011 to Goa instead of 12th December 2011.

Example 3a: Interest-based Recommendation leading to optimal suggestion.

| • | U: I would like to buy air tickets to Goa please |

| • | R: The best offer available is for $300. |

| • | U: I reject! How about $200? |

| • | R: I reject! Sorry there is none. Why do you need to go to Goa? |

| • | U: I want to go on a good, economical vacation |

| • | R: You can also go to Goa on 19th December 2011 if possible! I can recommend you an air ticket with XYZ airlines for $175, at a discounted rate for the suggested date |

| • | U: Great, I can manage that! I accept! |

In this example, it was possible to reach an option that satisfied the user. This happened because participants discussed the underlying interests (the user's interests in this particular case were to go on a good and economically cheap vacation). This was achieved by asking the user to shift the specific time period of vacation (if possible), as some airlines discounts were available on those dates. Therefore, an interest-based recommender system aims to: (1) discover the true sources of conflict, whether these are genuine conflicts between agent’s basic desires, resolvable conflicts between their plans, or simply a result of incorrect choices caused by ignorance and then; (2) find appropriate means to resolve such conflicts by choosing the most preferred economical option to fulfil agent’s desires.

Identifying and resolving conflict in IBR: To enable agents to discuss their interests and discover the underlying conflicts and compatibilities between these interests, it is essential to have an explicit representation of these interests. As we have seen in example 1a and 2a the conflict was due to the user’s goal of going to Goa and on the other hand he desired the trip to be economical in a specific time period which happens to be a festive and expensive season for Goa Tourism. The precise meaning of interests can be highly domain specific. But in general, we can view interests as the underlying reasons for choice. Choices are usually fundamentally motivated by one's intrinsic desire. In Fig. 1 we see that an agent requires recommendation because it wants to select the best available option compatible with its preferences, to achieve certain goals. And these goals are adopted because they contribute to achieving the agent's fundamental desires. Desires themselves are adopted because the agent believes it is in a particular state that justifies these desires. The following example clarifies the above view of agent reasoning.


| Fig. 1: | Abstract view of relationship between desires, beliefs, goals and recommendations |

Example 4a: A customer (user) wants to go on a holiday (a super goal). He therefore requires an economical (preference) air ticket in order to go to Goa (a goal), which in turn achieves the agent's wish to have a cheap and good vacation in the specified time period (a desire). The customer (user) desires to go to Goa during the preferred time period because he believes he can take a vacation and visit the Basilica of Bom Jesus to view the mortal remains of St. Francis Xavier during that period (a belief that justifies the desire).

Addressing preference conflict by discovering and manipulating goals: The most fundamental form of conflict in recommendation is over the user preferences specified for a goal. Often these preferences are conflicting or inconsistent. The direct way of resolving such conflicts is for each agent to explore alternative preferences, either individually or mutually, to satisfy its goal(s). This was shown in example 3a, where each agent started by presenting its most preferred option and then conceded by accepting an alternative, less preferable option for scheduling the trip (moving the holiday date to Goa from 12/12/11 to 19/12/11 for an air ticket worth $175). In this method of conflict resolution, an agent accepts an alternative option that achieves its (fixed) goals. This implies that after learning a new preference the recommender can generate other interesting options as well. As we saw in example 3a, the user states that he prefers to go to Goa because he wants a vacation. So, essentially, after exploring the immediate underlying goal, a recommender agent can propose a novel alternative option to its user. The difference between this and a normal concession as in example 2a, however, is that the user did not initially know of the right alternative of rescheduling the trip dates. Note that according to example 4a above, the choice to buy an air ticket is fundamentally motivated by the desire to visit the mortal remains of St. Xavier. However, in example 3a, it was sufficient for the user to inform the recommender of the immediate goal, namely to go to Goa on an economical vacation. If required, agents can explore each other's goals in more detail. As we will see in example 5a, agents can also attempt to influence each other's goals in order to resolve conflicts. Consider the following dialogue, in which the user reveals more information about his goals:

Example 5a: Influencing goals (continued from example 3a when schedule can’t be changed).

| • | R: What are your particular interests during the vacation in Goa? |

| • | U: I want to visit the Basilica of Bom Jesus to view the mortal remains of St. Francis Xavier |

| • | R: But Basilica of Bom Jesus is closed during 1/12/11 to 31/12/11 this year |

| • | U: I didn't know about this! Do you have more details? |

| • | R: It is under construction, source: Goa Tourism |

| • | U: I need to re-plan the vacation |

| • | R: How about vacation in Kanyakumari (user’s next preferred location for a holiday as mentioned in the user profile provided to the recommender agent R)? |

| • | U: Are the air tickets to Kanyakumari economical? |

| • | R: The cheapest air fare available is $200 |

| • | U: Well in this case, I accept a vacation in Kanyakumari! |

In this example, the user explains the inference that led to the desire to have a vacation in Goa in the first place. The recommender attacks the premises of that inference, thus causing the user to change the desire itself. The user now desires to visit Kanyakumari for an economical vacation. To achieve that desire, the user must adopt an alternative goal, to go to Kanyakumari, which then leads to accepting Kanyakumari deals. This type of conflict resolution is informally depicted in Fig. 2, where agent U changes his desire as a result of changing his beliefs. Agents can resolve such conflicts by using a persuasion dialogue during recommendation to change each other's conflicting beliefs. This dialogue is required because agents have conflicting beliefs about the world. These beliefs may be incorrect and hence cannot be considered knowledge. By receiving new arguments from other agents, an agent may alter its beliefs, but this still does not guarantee that these beliefs are true; they are only well supported by arguments.

A formal framework for generating IBR with argumentation: We now present a formal framework for IBR grounded in a specific theory of argumentation and situated in a cooperative multi-agent environment. In practical reasoning, it is essential to distinguish between arguing over beliefs and arguing over goals or desires. In argumentation theory, a proposition is believed because it is acceptable and relevant to a certain degree. Desires, on the other hand, are adopted because they are justifiable and may be influenced by some preference criteria. A desire is justifiable because the world is in a particular state that warrants its adoption. Finally, a desire is achievable if the agent has an achievable plan that achieves that desire. Such a desire becomes an intention or goal. Because arguments for beliefs and arguments for desires differ in nature, we need to treat them differently, taking into account the different ways these arguments relate to one another. Further, these beliefs, desires and the related supporting arguments can be used to generate an interesting recommendation or even to defeat one. To deal with the different nature of the arguments involved, we present three distinct argumentation frameworks: one for reasoning about beliefs, another for arguing about what desires should be pursued and a third for arguing about the best plan to intend in order to achieve these desires. The first framework is based on existing literature on argumentation over beliefs, originally proposed by Dung (1995) and later extended by Rahwan (2004). For arguing about desires and plans, we build on the argumentation-based approach for reasoning by Rahwan and Amgoud (2007). We refine and extend existing approaches by providing means for comparing arguments for recommendation based on their content, such as the current user’s preferences and certainty values. Thus, the worth (certainty and preference) of desires and beliefs is integrated into the argumentation frameworks and taken into account when comparing arguments.


| Fig. 2: | Resolving conflict by one or both agents changing their beliefs, resulting in different desires altogether |

Preliminaries: Here, we start by presenting the logical language which will be used throughout the framework, as well as the different mental states of the agents (their bases).

Let L be a propositional language, where ⊢ stands for classical inference and ≡ for logical equivalence. From L we can distinguish the three following sets of formulas:

| • | The set D which gathers all possible desires of agents |

| • | The set K which represents the knowledge |

| • | The set RES which contains all the available resources in a system |

From the above sets, two kinds of rules can be defined: desire-generation rules and planning rules. We also define the basic beliefs of an agent.

Definition 1 (Basic beliefs): An agent's basic beliefs are a set Bb = {(βi, ai, bi); i = 1, …, n} where βi is a consistent propositional formula of L, ai its degree of certainty and bi its preference as per the agent. The degrees of certainty and preference are required in order to generate an ordering over arguments, which is required by the underlying argumentation theory. Since we wish to propose an argumentation framework suitable for generating interesting recommendations for the user, it must take the user’s preferences bi into consideration. However, if the preference criterion is not required, the component can be neutralized by setting bi = 1 for every belief. This way preference will not affect decision-making.

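Definition 2 (Desire-Generation Rules or DGR): A desire-generation rule (or a desire rule) is an expression of the form (Rahwan and Amgoud, 2007):

$$\varphi_1 \wedge \dots \wedge \varphi_n \wedge \psi_1 \wedge \dots \wedge \psi_m \Rightarrow \psi$$

where ∀φi ∈ K and ∀ψi, ψ ∈ D.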

The meaning of the rule is “if the agent believes φ1,..., φn and desires ψ1,..., ψm, then the agent will desire ψ as well”.
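As a minimal sketch of how such a rule could be applied, the snippet below encodes a desire-generation rule as two sets of atoms (the believed φi and the desired ψi) plus a head ψ, and forward-chains until no new desire appears. The class name `DesireRule`, the function `generate_desires` and the atom names are illustrative assumptions, not part of the framework; weights and preferences are left out here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesireRule:
    """Illustrative encoding of phi_1 ^ ... ^ phi_n ^ psi_1 ^ ... ^ psi_m => psi."""
    beliefs: frozenset   # the phi_i, taken from K
    desires: frozenset   # the psi_i, taken from D
    head: str            # the generated desire psi


def generate_desires(rules, believed, initial_desires=()):
    """Fire rules repeatedly until no further desire can be added."""
    desires = set(initial_desires)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            body_holds = rule.beliefs <= believed and rule.desires <= desires
            if body_holds and rule.head not in desires:
                desires.add(rule.head)
                changed = True
    return desires


# Hypothetical atoms loosely modelled on the travel example.
rules = [
    DesireRule(frozenset({"can_take_leave"}), frozenset(), "vacation"),
    DesireRule(frozenset({"basilica_open"}), frozenset({"vacation"}), "visit_goa"),
]
believed = {"can_take_leave", "basilica_open"}
print(sorted(generate_desires(rules, believed)))  # ['vacation', 'visit_goa']
```

If the belief `basilica_open` is dropped, only `vacation` is generated, which mirrors how attacking a belief can remove the desire that depended on it.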

Definition 3 (Planning rules): A planning rule is an expression of the form (Rahwan and Amgoud, 2007):

$$\varphi_1 \wedge \dots \wedge \varphi_n \wedge r_1 \wedge \dots \wedge r_m \twoheadrightarrow \varphi$$

where ∀φi ∈ D, φ ∈ D and ∀ri ∈ RES.

A planning rule expresses that if φ1,..., φn are achieved and the resources r1,..., rm are used then φ is achieved.
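The reading of a planning rule can be sketched as a recursive achievability check: a desire is achievable if some rule for it has all its sub-desires achievable and all its resources available. The names `PlanningRule` and `achievable` and the resource labels are assumptions made for illustration. As a simplification, the sketch only tests that resources are owned and does not model their consumption across several rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanningRule:
    subgoals: frozenset    # phi_1..phi_n, desires that must be achieved first
    resources: frozenset   # r_1..r_m, resources the rule uses
    head: str              # phi, the desire achieved by the rule


def achievable(desire, rules, owned, _seen=frozenset()):
    """True if some rule for `desire` has owned resources and achievable sub-desires."""
    if desire in _seen:            # guard against cyclic rule sets
        return False
    seen = _seen | {desire}
    return any(
        rule.resources <= owned
        and all(achievable(sub, rules, owned, seen) for sub in rule.subgoals)
        for rule in rules
        if rule.head == desire
    )


# Hypothetical rules: a ticket needs budget and leave, the vacation needs the ticket.
rules = [
    PlanningRule(frozenset(), frozenset({"budget_200", "leave_dec_19"}), "air_ticket_goa"),
    PlanningRule(frozenset({"air_ticket_goa"}), frozenset({"hotel_booking"}), "vacation_goa"),
]
owned = {"budget_200", "leave_dec_19", "hotel_booking"}
print(achievable("vacation_goa", rules, owned))                     # True
print(achievable("vacation_goa", rules, owned - {"leave_dec_19"}))  # False
```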

Let DGR and PR be the set of all possible desire generation rules and planning rules, respectively. Each agent is equipped with four bases: a base Bb containing its basic beliefs, a base Bd containing its desire-generation rules, a base Bp containing its planning rules and finally a base R which will gather all the resources possessed by that agent.

Definition 4 (Agent’s bases): An agent is equipped with four bases <Bb, Bd, Bp, R>:

| • | Bb = {(βi, ai, bi): βi ∈ K, ai, bi ∈ [0, 1], i = 1,..., n}. Triplet (βi, ai, bi) means belief βi is certain at least to degree ai and βi is preferred up to degree bi by an agent |

| • | Bd = {(dgri, wi, pi): dgri ∈ DGR, wi ∈ ℝ, i = 1,..., m}. Symbol wi denotes the weight of the desire ψ generated by the rule dgri and pi denotes the preference for that desire. Let Weight (ψ) = wi and Preference (ψ) = pi. In the proposed framework the worth, i.e., Worth (ψ), of a preferred desire depends on both the certainty and the preference associated with its antecedents. Therefore: |

|

where,

| • | Bp = {pri : pri ∈ PR, i = 1,..., l} |

| • | R = {ri, i = 1,…, n} |

where ri ∈ RES. These resources appear in the plan and represent the material required to be consumed for satisfying a related desire.

Using desire-generation rules, we can characterise potential desires as well.

Definition 5 (Potential desire): The set of potential desires of an agent is given by:

$$PD = \{\psi \in D : \exists\, (dgr_i, w_i, p_i) \in B_d \text{ with } dgr_i = \varphi_1 \wedge \dots \wedge \varphi_n \wedge \psi_1 \wedge \dots \wedge \psi_m \Rightarrow \psi\}$$

These are “potential” desires because the agent does not know yet whether the antecedents (i.e., bodies) of the corresponding rules are true or not. To reason about their truthfulness, explanatory arguments are defined. After a potential desire is found to be true, its worth is also calculated as stated above.
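Read this way, the potential desires are simply the heads of the rules stored in Bd, collected without evaluating any rule body. The sketch below illustrates that reading; the tuple layout `(rule, weight, preference)`, the names `DesireRule` and `potential_desires`, and all numeric values are assumptions chosen for the example.

```python
from typing import NamedTuple


class DesireRule(NamedTuple):
    beliefs: tuple   # the phi_i in the rule body
    desires: tuple   # the psi_i in the rule body
    head: str        # the desire psi the rule would generate


def potential_desires(desire_base):
    """Collect the head of every rule in B_d; rule bodies are not checked."""
    return {rule.head for rule, _weight, _preference in desire_base}


# Hypothetical desire base: entries are (rule, weight w_i, preference p_i).
B_d = [
    (DesireRule(("can_take_leave",), (), "vacation"), 0.9, 0.8),
    (DesireRule(("basilica_open",), ("vacation",), "visit_goa"), 0.7, 1.0),
    (DesireRule(("basilica_closed",), ("vacation",), "visit_kanyakumari"), 0.6, 0.5),
]
print(sorted(potential_desires(B_d)))
# ['vacation', 'visit_goa', 'visit_kanyakumari']
```

Both `visit_goa` and `visit_kanyakumari` appear even though their bodies rely on contradictory beliefs, which is exactly why these desires are only "potential" until arguments settle which bodies hold.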

Argumentation for deducing beliefs: In this section, we present an extended framework for arguing about beliefs based on the study of Rahwan (2004). The proposed framework gives due consideration to the user’s preferences and the certainty of the propositions during the process of belief deduction by either agent (user or recommender).

Definition 6 (Belief argument): A belief argument A is a pair A = <H, h> such that:

| • | H ⊆ Bb |

| • | H is consistent |

| • | H ⊢ h |

| • | H is minimal (for set ⊆) among the sets satisfying conditions 1, 2, 3 |

The support of the argument is denoted by SUPP (A) = H. The conclusion of the argument is denoted by CONC (A) = h. Ab stands for the set of all possible belief arguments that can be generated from a belief base Bb.
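For a small belief base, the conditions of Definition 6 can be checked by brute force. The sketch below is only an illustration under stated assumptions: formulas are represented as Python predicates over truth assignments, the atom names in `ATOMS` are hypothetical, entailment is tested by enumerating all assignments, and the certainty and preference degrees attached to beliefs are ignored.

```python
from itertools import combinations, product

ATOMS = ("closed", "visit")  # hypothetical propositional atoms


def models(formulas):
    """All truth assignments over ATOMS that satisfy every formula."""
    worlds = (dict(zip(ATOMS, values))
              for values in product([False, True], repeat=len(ATOMS)))
    return [w for w in worlds if all(f(w) for f in formulas)]


def consistent(formulas):
    return bool(models(formulas))


def entails(formulas, conclusion):
    return all(conclusion(w) for w in models(formulas))


def arguments_for(belief_base, conclusion):
    """Supports H that are consistent, entail the conclusion and are subset-minimal."""
    supports = []
    names = sorted(belief_base)
    for size in range(len(names) + 1):          # smaller candidate sets first
        for subset in combinations(names, size):
            formulas = [belief_base[n] for n in subset]
            if not consistent(formulas) or not entails(formulas, conclusion):
                continue
            if any(set(found) <= set(subset) for found in supports):
                continue                         # a smaller support already exists
            supports.append(subset)
    return supports


belief_base = {
    "b1": lambda w: w["closed"],                         # the basilica is closed
    "b2": lambda w: (not w["closed"]) or not w["visit"],  # closed implies no visit
    "b3": lambda w: w["visit"],                          # the visit takes place
}
print(arguments_for(belief_base, lambda w: not w["visit"]))  # [('b1', 'b2')]
```

The whole base is inconsistent (b1, b2 and b3 cannot hold together), yet each conclusion still has a consistent minimal support, which is what allows conflicting arguments to be built from one base.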

The force of an argument can rely on the information from which it is constructed. Belief arguments involve only one kind of information: the beliefs. In an argument-based framework for IBR, an agent’s own beliefs are influenced by its preferences as well. Thus, arguments using more certain beliefs with higher preferences are stronger than arguments using less certain beliefs with lower preferences. A certainty level is therefore associated with each argument. That level is based on the least entrenched belief used in the argument, i.e., min (ai). This is done to avoid wishful thinking on an agent’s part. Further, if min (ai) < δ, where δ is a required certainty threshold for a belief (let δ = 0.5), the argument is identified as a weaker argument and its level is reduced further: it equals min (ai) * min (bi), where min (bi) corresponds to the least preferred belief used in the argument. In the other case, where min (ai) > δ, the argument is identified as a stronger one and hence is made stronger, as its certainty level equals min (ai) * max (bi). This behavior can be controlled through the threshold δ.

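Definition 7 (Certainty level): Let A = <H, h> ∈ Ab. The certainty level of A is:

$$\mathrm{Level}(A) = \begin{cases} \min\{a_i\} \cdot \min\{b_i\} & \text{if } \min\{a_i\} < \delta \\ \min\{a_i\} \cdot \max\{b_i\} & \text{if } \min\{a_i\} > \delta \end{cases}$$

where the minima and the maximum range over the triplets (βi, ai, bi) ∈ H.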
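The threshold behaviour described above can be made concrete with a short sketch. The function name `certainty_level` and the sample degrees are assumptions; the text leaves the case min (ai) = δ unspecified, so treating it as the "strong" case here is also an assumption. The support is assumed to be non-empty.

```python
def certainty_level(support, delta=0.5):
    """Level of an argument from the (certainty a_i, preference b_i) pairs of its support."""
    certainties = [a for a, _ in support]
    preferences = [b for _, b in support]
    weakest = min(certainties)
    if weakest < delta:
        # Weak argument: scaled by the least preferred belief.
        return weakest * min(preferences)
    # Strong argument (assumption: weakest == delta is handled here too):
    # scaled by the most preferred belief.
    return weakest * max(preferences)


# Hypothetical supports, each pair is (a_i, b_i).
strong = [(0.75, 0.5), (1.0, 1.0)]
weak = [(0.875, 0.5), (0.25, 1.0)]
print(certainty_level(strong))  # 0.75  (0.75 * 1.0)
print(certainty_level(weak))    # 0.125 (0.25 * 0.5)
```

Setting every preference to 1, as suggested for the case where preferences are not needed, makes the level collapse to min (ai) in both branches.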

The different forces of arguments make it possible to compare pairs of arguments. Indeed, the higher the certainty level of an argument, the stronger that argument is. Formally:

Definition 8 (Comparing arguments): Let A1, A2 ∈ Ab. The argument A1 is preferred to A2, denoted A1 ≽ A2, if and only if Level (A1) ≥ Level (A2). Preference relations between belief arguments are used not only to compare arguments in order to determine the “best” ones but also to refine the notion of acceptability of arguments. Since a belief base may be inconsistent, arguments may conflict.

Definition 9 (Conflicts between belief arguments): Let A1 = <H1, h1>, A2 =<H2, h2>εAb.

| • | A1 undercuts A2 if ∃ h’2εH2 such that h1 = ¬h’2 |

| • | A1 rebuts A2 if h1 = ¬h2 |

| • | A1 attacksb A2 iff A1 undercuts A2 or A1 rebuts A2, and A2 is not preferred to A1 |

Having defined the basic concepts, we now define the argumentation system for handling belief arguments.

Definition 10 (Belief argumentation framework): An argumentation framework AFb for handling belief arguments is a pair AFb = <Ab, Attacksb> where, Ab is the set of belief arguments and Attacksb is the defeasibility relation between arguments in Ab.

Since arguments are conflicting, we must know what the acceptable arguments are. Beliefs supported by such arguments will be inferred from the base Bb.

We now define the notion of defense in the belief arguments before proceeding to acceptability.

Definition 11 (Defence): Let S⊆Ab and A1εAb. S defends A1 iff for every belief argument A2 such that A2 attacksb A1, there is some argument A3εS such that A3 attacksb A2. Therefore, an argument is acceptable either if it is not attacked, or if it is defended by acceptable arguments in the set S.

Definition 12 (Acceptable belief argument): A belief argument AεAb is acceptable with respect to a set of arguments S⊆Ab if either (Rahwan, 2004):

| • | ∄A1εS such that A1 attacksb A; or |

| • | ∀A1εS such that A1 attacksb A, we have an acceptable argument A2εS such that A2 attacksb A1 |

This recursive definition enables us to characterize the set of acceptable arguments using a fixed-point definition.

Proposition 1: Let AFb = <Ab, Attacksb> be an argumentation framework and let F be a function such that F (S) = {AεAb: S defends A}. The set Acc (Ab) of acceptable belief arguments is defined as: Acc (Ab) = ∪i≥0 F^i (∅).

Proof: Due to the use of propositional language and finite bases, the argumentation system is finitary, i.e., each argument is attacked by a finite number of arguments. Since the argumentation system is finitary, the function F is continuous. Consequently, the least fixpoint of F is ∪i≥0 F^i (∅). The set Acc (Ab) contains non-attacked arguments as well as arguments defended directly or indirectly by non-attacked ones.
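The fixed-point characterization can be illustrated by iterating F from the empty set until nothing changes. The following Python sketch assumes a finite set of arguments and an attack relation given as (attacker, attacked) pairs; the function names and the toy framework are hypothetical and only illustrate the construction.

```python
from typing import Hashable, Iterable, Set, Tuple

Attack = Tuple[Hashable, Hashable]  # (attacker, attacked)


def defends(s: Set[Hashable], a: Hashable,
            arguments: Set[Hashable], attacks: Set[Attack]) -> bool:
    """True if every attacker of `a` is attacked by some member of `s`."""
    attackers = [b for b in arguments if (b, a) in attacks]
    return all(any((c, b) in attacks for c in s) for b in attackers)


def acceptable(arguments: Iterable[Hashable],
               attacks: Iterable[Attack]) -> Set[Hashable]:
    """Least fixpoint of F(S) = {A : S defends A}, reached from the empty set."""
    args = set(arguments)
    atk = set(attacks)
    current: Set[Hashable] = set()
    while True:
        nxt = {a for a in args if defends(current, a, args, atk)}
        if nxt == current:  # F(current) == current: fixpoint reached
            return current
        current = nxt


# Toy framework: a attacks b, b attacks c. The argument a is not attacked,
# and c is defended by a, so both are acceptable.
result = acceptable({"a", "b", "c"}, {("a", "b"), ("b", "c")})
print(sorted(result))
```

Because F is monotone and the set of arguments is finite, the loop terminates; two arguments that only attack each other are both left out.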

Argumentation for generating desires: Amgoud and Kaci (2005) introduced explanatory arguments as a means for generating desires from beliefs only. They used only certainty values to evaluate belief arguments. Rahwan and Amgoud (2007) extended their framework by defining separate argumentation frameworks for beliefs and desires. They also gave Desire Generation Rules (DGR), which could be generated from the beliefs as well as the desires of an agent. They used explanatory arguments to justify the desires generated from the beliefs and from the existing or newly generated desires, and they used the certainty values of beliefs and desires to evaluate an explanatory argument. We extend their work by introducing recommendation arguments as a means for generating desires from beliefs and desires (due to an explanation or recommendation) which can be influenced by the user’s preferences. A recommendation argument gives due consideration to both the certainty value and the user’s preference. This is an advantage for the recommender agent, which can now generate interesting recommendations for the user. The recommendations generated by these arguments will be both feasible and worthwhile for the user, because of the way both certainty and preference are taken into account (definition 7). For details on simple explanatory arguments, see Amgoud and Kaci (2005) and Rahwan and Amgoud (2007). We now present argumentation for generating interesting desires considering preferences.

Arguing over desires: In what follows, the functions BELIEFS (A), DESIRES (A) and CONC (A) return, respectively for a given argument A, the beliefs used in A, the desires supported by A and the conclusion of the argument A.

Definition 13 (Recommendation argument): Let <Bb, Bd> be two bases.

| • | If ∃(⇒φ)εBd then ⇒φ is a recommendation argument (A) with: BELIEFS (A) = ∅; DESIRES (A) = {φ}; CONC (A) = φ |

| • | If B1,..., Bn are belief arguments and E1,..., Em are explanatory arguments and R1,..., Rp are recommendation arguments and ∃CONC (B1) ∧...∧CONC (Bn)∧CONC (E1)∧...∧CONC (Em) ∧CONC (R1) ∧...∧CONC (Rp)⇒ψεBd then B1,..., Bn, E1,..., Em, R1,..., Rp⇒ψ is a recommendation argument (A) with: |

| • | BELIEFS (A) = SUPP(B1)∪..∪SUPP (Bn)∪BELIEFS (E1)∪...∪BELIEFS (Em)∪BELIEFS (R1)∪... ∪BELIEFS (Rp) |

| • | DESIRES(A) = DESIRES (E1)∪...∪DESIRES (Em)∪DESIRES (R1)∪...∪DESIRES (Rp)∪{ψ}; and CONC(A) = ψ; and |

| • | TOP(A) = CONC (B1)∧...∧CONC (Bn)∧CONC (E1)∧...∧CONC (Em)∧CONC (R1)∧...∧CONC (Rp)⇒ψ is the TOP rule of the argument |

Let Ar denote the set of all recommendation arguments that can be generated from <Bb, Bd>, Ab the set of all belief arguments (definition 6) and Ad the set of all explanatory arguments; then A = Ar∪Ab∪Ad.

Definition 14 (The force of recommendation arguments): Let AεAr be a recommendation argument. The force of A is Force (A) = <Level (A), Worth (A)> where,

| • |  |

| • | If BELIEFS (A) = ∅ then Level (A) = 1; |

| • | Worth (A) = wi * pi such that (TOP (A), wi, pi)εBd. (wi, pi are defined as per definition 4) |

In order to avoid any kind of wishful thinking, belief arguments are supposed to take precedence over explanatory and recommendation ones. We consider preference value for belief and desires so that interesting recommendations can be generated. Formally:

Definition 15 (Comparing mixed arguments): ∀A1εAb, ∀A2εAd and ∀A3εAr, it holds that A1 is preferred to A2 as well as to A3, denoted A1 ≽ A2 and A1 ≽ A3. ∀A2εAd and ∀A3εAr we have A3 ≽ A2 if p3 > w2, else vice versa. Here, p3 refers to the preferred weight of the argument A3 whereas w2 is the weight of the argument A2.

Concerning recommendation arguments, one may choose an argument which will, for sure, justify an important and preferable desire. This suggests the use of a conjunctive combination of the certainty level of the argument and its weight influenced by the preference. However, a simple conjunctive combination is open to discussion since it gives an equal weight to the preference of the desire and to the certainty of the set of beliefs that establishes that the desire takes place. Since beliefs verify the validity of desires, it is important that beliefs take precedence over the desires. This is translated by the fact that the certainty level of the argument is more important than the priority of the desire. Formally:

Definition 16 (Comparing recommendation arguments): Let A1, A2εAr. A1 is preferred to A2, denoted by A1 ≽ A2, if:

| • | Level (A1)>Level (A2), or |

| • | Level (A1) = Level (A2) and Worth (A1)>Worth (A2) (from definition 14) |
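The comparison in Definition 16 is lexicographic: the certainty level decides first and the worth only breaks ties. A minimal Python sketch follows, assuming the force of an argument is available as a (level, worth) pair; the class and function names are hypothetical.

```python
from typing import NamedTuple


class Force(NamedTuple):
    """Force of a recommendation argument as the pair <Level, Worth>."""
    level: float
    worth: float


def is_preferred(f1: Force, f2: Force) -> bool:
    """Level takes precedence; worth is only consulted when levels are equal."""
    if f1.level != f2.level:
        return f1.level > f2.level
    return f1.worth > f2.worth


# Hypothetical forces: a certain but modest argument against an
# uncertain argument for a highly valued desire.
certain = Force(level=0.63, worth=0.63)
uncertain = Force(level=0.0, worth=0.9)
print(is_preferred(certain, uncertain))
```

Exact equality on floating-point levels is used here for simplicity; an implementation working with computed levels might compare within a small tolerance instead.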

A recommendation argument for some desire can be defeated either by a belief argument (which undermines the truth of the underlying belief justification), or by another explanatory or recommendation argument (which undermines one of the existing desires the new desire is based on).

Definition 17 (Attack among recommendation and belief arguments): Let A1εAr and A2εAb.

| • | A2 b-undercuts A1 iff ∃h1 εBELIEFS (A1) such that CONC (A2) = ¬h1 |

| • | A2 d-undercuts A1 iff ∃h1 εDESIRES (A1) such that CONC (A2) = ¬h1 |

| • | An argument A’εA attacksr A1εAr iff A’ b-undercuts or d-undercuts A1 and A1 is not preferred to A’ |

Definition 18 (Attack among explanatory and recommendation arguments): Let A1εAr, A2εAd.

| • | A2 b-undercuts A1 iff ∃ h1 εBELIEFS (A1) such that CONC (A2) = ¬h1 |

| • | A2 d-undercuts A1 iff ∃ h1 εDESIRES (A1) such that CONC (A2) = ¬h1 |

| • | An argument A’εA attacksr A1εAr iff A’ b-undercuts or d-undercuts A1 and A1 is not preferred to A’ |

Now that we have defined the notions of argument, defeasibility and attack relations, we define the argumentation framework that should return the justified/valid desires.

Definition 19 (Argumentation framework): An argumentation framework AFr for handling recommendation arguments is a tuple AFr = <Ab, Ad, Ar, Attackb, Attackd, Attackr> where Ab is the set of belief arguments, Ad the set of explanatory arguments, Ar the set of recommendation arguments, Attackb is the defeasibility relation between arguments in Ab, Attackd is the defeasibility relation between arguments in Ad and Attackr is the defeasibility relation between arguments in Ar.

The definition of acceptable recommendation arguments is based on the notion of defence. Unlike belief arguments, a recommendation argument can be defended by either a belief argument, an explanatory argument or a recommendation argument itself. Formally:

Definition 20 (Defence among recommendation, explanatory and belief arguments): Let S⊆A and AεA. S defends A if, for every A’εA such that A’ attacksb (or attacksd or attacksr) A, there is some argument A”εS which attacksb (or attacksd or attacksr) A’. F’ is a function such that F’ (S) = {AεA such that S defends A}.

Proposition 2: Let AFr = <Ab, Ad, Ar, Attackb, Attackd, Attackr> be an argumentation framework. The set Acc (Ad) of acceptable explanatory arguments is defined as:

Proposition 3: Let AFr = <Ab, Ad, Ar, Attackb, Attackd, Attackr> be an argumentation framework. The set Acc (Ar) of acceptable recommendation arguments is defined as:

Proof: Due to the use of propositional language and finite bases, the argumentation system is finitary, i.e., each argument is attacked by a finite number of arguments. Since the argumentation system is finitary, the function F’ is continuous. Consequently, the least fixpoint of F’ is ∪i≥0 F’^i (∅).

Proposition 4: Let AFr = <Ab, Ad, Ar, Attackb, Attackd, Attackr> be an argumentation framework:

Proof: This follows directly from the definitions of F, F” and F’ and the fact that belief arguments are not attacked by explanatory or recommendation arguments since we suppose that belief arguments are preferred to other ones.

Definition 21 (Justified desire): A desire ψ is justified iff ∃ AεAr such that CONC (A) = ψ and AεAcc (Ar).

Desires supported by acceptable recommendation arguments are justified and hence the agent will pursue such recommendations (if they are achievable, that is if a plan exists for them).

Argumentation for planning: In the previous subsection, we presented a framework for arguing about desires and producing a set of justified desires. Planning is a substantial and well-developed area in AI. The aim of this study is not to propose a novel planning framework here. Instead, we intend to use the notion of the instrumental argument (Rahwan, 2004) to capture dependencies of interest between low-level goals and higher-level goals/desires so as to enable an interest-based recommendation dialogue based on the proposed argumentation frameworks. The argumentation-based framework is used for generating non-conflicting plans for achieving a (sub) set of such desires. We modify the way utility of instrumental arguments is calculated, so as to accommodate certainty and user’s preference in determining the best plan. This would further affect the way conflicts are resolved and the final intention set is generated. We now define the notion of planning rule which is the basic building block for specifying plans.

Definition 22 (Partial plan): A partial plan is a pair [H, φ] where,

| • | φεR and H = ∅, or |

| • | φεD and H = {φ1,..., φn, r1,..., rm} such that ∃φ1∧...∧φn∧r1∧...∧rm |

| • | A partial plan [H, φ] is elementary iff H = ∅. |

Definition 23 (Instrumental argument, or complete plan for recommendation): An instrumental argument is a pair <T, d> such that dεD and T is a finite tree such that (Rahwan, 2004):

| • | The root of the tree is a partial plan [H, d] |

| • | A node [{φ1,..., φn, r1,..., rm}, h’] has exactly n + m children [H’1, φ1],..., [H’n, φn], [∅, r1],..., [∅, rm], where each [H’i, φi] and each [∅, rk] is a partial plan |

| • | The leaves of the tree are elementary partial plans |

Nodes (T) is a function which returns the set of all partial plans of tree T, Des (T) is a function which returns the set of desires that plan T achieves and Resources (T) is a function which returns the set of all resources needed to execute T.
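The tree structure of an instrumental argument and the functions Nodes (T) and Resources (T) can be sketched as a small recursive data structure. This is an illustrative sketch only: the class `PartialPlan`, its fields and the example tree are hypothetical, and each node simply stores one child per element of its support H.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class PartialPlan:
    """A partial plan [H, phi]; `children` holds one sub-plan per element of H."""
    conclusion: str
    is_resource: bool = False
    children: List["PartialPlan"] = field(default_factory=list)

    def is_elementary(self) -> bool:
        # Elementary partial plans have an empty support H
        return not self.children


def nodes(plan: PartialPlan) -> List[PartialPlan]:
    """All partial plans of the tree rooted at `plan`, in pre-order."""
    result = [plan]
    for child in plan.children:
        result.extend(nodes(child))
    return result


def resources(plan: PartialPlan) -> Set[str]:
    """All resources needed to execute the tree rooted at `plan`."""
    return {n.conclusion for n in nodes(plan) if n.is_resource}


# Hypothetical tree: desire d needs sub-desire phi1 (which needs r1) and r2.
tree = PartialPlan("d", children=[
    PartialPlan("phi1", children=[PartialPlan("r1", is_resource=True)]),
    PartialPlan("r2", is_resource=True),
])
print(len(nodes(tree)), sorted(resources(tree)))
```

The leaves of this tree are exactly the nodes for which `is_elementary()` holds, matching the third condition of Definition 23.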

Let Ap denote the set of all instrumental arguments that can be built from the agent’s bases. An instrumental argument (a necessary sub-goal for the realization of a super-goal) may achieve one or several desires of different worth (certainty and preference) required for the complete execution of a recommendation plan. The strength of that argument is therefore its “benefit” or “utility”, which is the cumulative worth of the desires (sub-goals) essential to the realization of the plan. Formally:

Definition 24 (Strength of instrumental arguments): Let A = <T, d> be an instrumental argument. The utility of A is given as:

In the study of Amgoud (2004), it has been shown that there are four families of conflicts between partial plans. In fact, two partial plans [H1, φ1] and [H2, φ2] may be conflicting for one of the following reasons:

| • | Desire-desire conflict, i.e., {φ1}∪{φ2} ⊢ ⊥ |

| • | Plan-plan conflict, i.e., H1∪H2 ⊢ ⊥ |

| • | Consequence-consequence conflict, i.e., the consequences of achieving the two desires h1 and h2 are conflicting |

| • | Plan-consequence conflict, i.e., the plan H1 conflicts with the consequences of achieving h2 |

The above conflicts are captured when defining the notion of conflict-free sets of instrumental arguments for recommendation.

Definition 25 (Conflict-free sets of instrumental arguments): Let S⊆Ap. S is conflict-free, with respect to the agent’s beliefs Bb, iff ∃B’⊆Bb such that:

| • | B’ is consistent and |

| • | ∪<T, d >εS [∪[H, φ] εNodes (T) (H∪{φ})]∪B’ |

As with belief and recommendation arguments, we now present the notion of an acceptable set of instrumental arguments.

Definition 26 (Acceptable set of instrumental arguments): Let S⊆Ap. S is acceptable iff:

| • | S is conflict-free |

| • | S is maximal for set inclusion among the sets verifying the above condition |

| • | S being maximal ensures that there are not any redundant plans for the same desire and each set of acceptable instrumental arguments contains at most one plan per desire. Formally: |

Let S1,..., Sn be the different acceptable sets of instrumental arguments.

Definition 27 (Achievable and justified desire): Let S1,..., Sn be the different acceptable sets of instrumental arguments. A desire ψ (recommended or otherwise) is achievable if:

Definition 28 (Utility of set of instrumental arguments): For an acceptable set of instrumental arguments S = {A1,..., Am} = {<T1, ψ1>,... , <Tm, ψm>}, the set of all desires achieved by S are as follows:

The utility of a set of arguments S is:

We can now construct a complete pre-ordering on the set {S1,..., Sn} of acceptable sets of instrumental arguments. The basic idea is to prefer the set with a maximum average utility.

Definition 29 (Preferred set): Let S1,..., Sn be the acceptable sets of instrumental arguments. Si is preferred to Sj if Utility (Si) ≥ Utility (Sj).

The above definition allows for cases where a set with a single desire/plan pair is preferred to another set with two or more desire/plan pairs for achieving some goal (because utility achieved by the former desire/plan pair is higher than the other two when we consider the maximum average utility).
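The effect of preferring the set with the maximum average utility can be illustrated with a short Python sketch. It rests on an assumption: the utility of a set is taken to be the arithmetic mean of the utilities of its instrumental arguments, which is one reading of "average utility" and is not confirmed by the formulas available here. All names and numbers are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def set_utility(utilities: List[float]) -> float:
    """Assumed set utility: mean utility of the instrumental arguments in the set."""
    return mean(utilities)


def preferred_set(candidates: Dict[str, List[float]]) -> str:
    """Name of the acceptable set with the highest (assumed) average utility."""
    return max(candidates, key=lambda name: set_utility(candidates[name]))


# Hypothetical acceptable sets: S1 holds one desire/plan pair, S2 holds two.
candidates = {"S1": [0.9], "S2": [0.7, 0.5]}
print(preferred_set(candidates))
```

Under this reading the single pair in S1 is preferred although S2 achieves more desires, which is the situation described above. A cumulative (summed) utility would rank the two sets the other way round.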

The (justified and achievable) desires will form the intentions of the agent. These desires are supported by a belief argument or an acceptable recommendation argument or an acceptable explanatory argument.

Definition 30 (Intention set): Let ID⊆PD. ID is an intention set iff:

| • | ∀ diεID, di is justified and achievable |

| • | ∃ Slε{S1,..., Sn} such that ∀di ε ID, ∃<Ti, di>εSl |

| • | ∀ Sk≠Sl with Sk satisfying condition 2, then Sl is preferred to Sk |

| • | ID is maximal for set inclusion among the subsets of PD satisfying the above conditions |

The second condition ensures that all desires are achievable together. If there is more than one intention set, a single one must be selected to become the agent’s intention. The chosen set ID is denoted by I.

Looking at the argumentation framework for IBR, we can now observe how the various arguments (belief, explanatory and recommendation) affect the mental attitudes (beliefs, desires and intentions) of the agents. Definitions 12, 21 and 26 (for example), which give the acceptability criteria for an argument, show that an accepted argument maintains consistency in the bases. By defining defeasibility relations between arguments we show how a stronger argument attacks a weaker one, leading to changes in the beliefs, desires and intentions that keep the bases consistent. Finally, a change in the mental attitudes of an agent leads to changes in its plans as well. Therefore, our proposed framework serves two purposes. Firstly, it considers the user’s preferences along with the certainty value to evaluate an argument and generate an interesting recommendation. Secondly, it is able to affect and change the mental attitudes of an agent, hence convincing the agent to change its plans and intentions in order to achieve the most favourable outcome, if any.

A worked example: The examples detailed below put the above concepts together. We can now analyze how the various arguments (belief, explanatory and recommendation) affect the mental attitudes (beliefs, desires and intentions) of the agents. The illustrative examples build on the previously mentioned examples 1a to 5a. We use the logical language L that is used throughout the framework: L is a propositional language whose logic is an argument-based non-monotonic logic. We present the examples progressively.

Let the following constructs denote sentences in natural language:

| • | hig = “holiday in Goa” |

| • | hik = “holiday in Kanyakumari” |

| • | him = “holiday in Manali” |

| • | wgv = “want to go for vacations” |

| • | payt = “pay for air tickets” |

| • | fdate = “12-Dec-2011” |

| • | tdate = “17-Dec-2011” |

| • | wcv = “want a cheap vacation” |

| • | wsxb = “to view the mortal remains of St. Francis Xavier” |

| • | sxodj = “the mortal remains of St. Francis Xavier in Basilica de Bom Jesus Church are displayed every 10 years in December and January” |

| • | sxnd = “the mortal remains of St. Francis Xavier in Basilica de Bom Jesus Church are not on display this year in December” |

| • | sxcg =“Basilica de Bom Jesus Church is under renovation” |

| • | Let the resources be RES = {p200, cag, cak} |

| • | p200 = “pay $200 for vacations ” |

| • | cag = “cost of air tickets to Goa $300 ” |

| • | cak = “cost of air tickets to Kanyakumari $200” |

| • | Initializing the bases: Bub and Brb are the belief bases of agent U and agent R, respectively. Bub = {(payt, 1, 0), (fdate, 0.6, 0.9), (tdate, 0.6, 0.9), (sxodj, 0.8, 0), (wcv, 0.9, 1), (wgv, 1, 1)} Brb = {(sxnd, 1, 0), (sxcg, 1, 0), (cag, 1, 0), (cak, 1, 0)} |

IBR example 1b: Now let;

| • | Bub = {(payt, 1, 0), (fdate, 0.6, 0.9), (tdate, 0.6, 0.9), (sxodj, 0.8, 0), (wcv, 0.9, 1), (wgv, 1, 1)} |

| • | Bud = {(hig, 0.9, 0.9), (hik, 0.7, 0.6), (him, 0.4, 0.0), (wsxb, 0.5, 0.9), (p200, 0.9, 1)} |

| • | The agent can now construct the desire and the related arguments to go to Goa for vacation: |

| • | B1 = <{wgv}, wgv> |

| • | Bd = {(wgv → hig)} |

| • | A1: B1⇒ hig, (1*1+0.9*0.9)/2 = 0.905 |

| • | With BELIEFS (A1) = {wgv}, DESIRES (A1) = {hig}, CONC (A1) = {hig}. |

| • | Consider the following DGR along with their worth. Calculation of worth includes the user preference as given in definition 4: |

| • | (wgv⇒ hig, 0.905); |

| • | (hig∧p200⇒ wcv, 0.87); |

| • | (wgv ⇒ hik, 0.71); |

| • | (wgv ⇒ him, 0.5). |

But cag ⇒ ¬(hig∧p200) conflicts with the user’s desire to visit Goa for $200. This leads to a situation with no solution, as explained in example 1a, and results in a conflict involving the argument A1.
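The worth values listed for the four DGRs of example 1b are consistent with averaging the product certainty × preference over the literals of each rule. Definition 4 is not reproduced in this section, so this reading is an assumption inferred from the numbers, and the function name `rule_worth` is hypothetical. The following Python sketch checks it against those four rules only.

```python
from typing import Dict, List, Tuple

# (certainty, preference) pairs taken from the bases Bub and Bud of example 1b
BASE: Dict[str, Tuple[float, float]] = {
    "wgv": (1.0, 1.0),
    "hig": (0.9, 0.9),
    "hik": (0.7, 0.6),
    "him": (0.4, 0.0),
    "p200": (0.9, 1.0),
    "wcv": (0.9, 1.0),
}


def rule_worth(literals: List[str]) -> float:
    """Assumed worth: mean of certainty * preference over all literals of a rule."""
    products = [BASE[name][0] * BASE[name][1] for name in literals]
    return sum(products) / len(products)


print(round(rule_worth(["wgv", "hig"]), 3))          # wgv => hig
print(round(rule_worth(["hig", "p200", "wcv"]), 3))  # hig and p200 => wcv
print(round(rule_worth(["wgv", "hik"]), 3))          # wgv => hik
print(round(rule_worth(["wgv", "him"]), 3))          # wgv => him
```

For instance, wgv ⇒ hig gives (1*1 + 0.9*0.9)/2 = 0.905, the value attached to A1.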

IBR example 5b: Extending example 1b, let the user express the desire to view the mortal remains of St. Francis Xavier along with a vacation in Goa.

| • | Therefore, (sxodj∧hig ⇒wsxb, 0.69) |

| • | But the agent R (recommender) has information in the belief base, where it is mentioned that “Basilica de Bom Jesus Church is under renovation this year”, therefore, the agent U will not be able to visit the mortal remains of St. Francis Xavier in it |

| • | Hence, the agent R contains the belief rules: (sxcg→sxnd, 1) (sxnd→¬sxodj, 1) |

| • | With this new information the bases are as follows: |

| • | Bb: {(wgv,1,1), (sxodj, 0.8, 0), (sxcg→sxnd, 1), (sxnd→¬sxodj, 1)} |

| • | Bd: {(wgv ⇒hig, 0.905), (sxodj∧hig ⇒ wsxb, 0.69)} |

The following argument can be built:

| • | B2: <{sxcg, sxcg→sxnd, sxnd→¬sxodj}, ¬sxodj> |

| • | A2: A1 ⇒wsxb |

| • | A3: B2 ⇒ ¬sxodj |

It is clear that the argument A3 b-undercuts the argument A2 since sxodj ε BELIEFS (A2) and CONC (A3) = ¬sxodj.
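The b-undercut and d-undercut checks of Definitions 17 and 18 amount to testing whether the attacker's conclusion negates one of the target's beliefs or desires. A minimal Python sketch follows; the `Argument` class, the string encoding of negation with a leading "¬", and the exact belief and desire sets given to A2 and A3 are assumptions made for illustration (the text only states that sxodj is among the beliefs of A2 and that A3 concludes ¬sxodj).

```python
from dataclasses import dataclass
from typing import FrozenSet


def negate(formula: str) -> str:
    """Negation on string-encoded literals: toggles a leading '¬'."""
    return formula[1:] if formula.startswith("¬") else "¬" + formula


@dataclass(frozen=True)
class Argument:
    beliefs: FrozenSet[str]
    desires: FrozenSet[str]
    conc: str


def b_undercuts(attacker: Argument, target: Argument) -> bool:
    """The attacker's conclusion negates a belief used by the target."""
    return any(attacker.conc == negate(h) for h in target.beliefs)


def d_undercuts(attacker: Argument, target: Argument) -> bool:
    """The attacker's conclusion negates a desire supported by the target."""
    return any(attacker.conc == negate(h) for h in target.desires)


# Assumed encoding of the two arguments from example 5b
a2 = Argument(frozenset({"wgv", "sxodj"}), frozenset({"hig", "wsxb"}), "wsxb")
a3 = Argument(frozenset({"sxcg"}), frozenset(), "¬sxodj")
print(b_undercuts(a3, a2), d_undercuts(a3, a2))
```

A full attack check would additionally compare the two arguments under the preference relation, which is omitted here.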

Therefore, agent R is able to inform agent U that viewing the mortal remains of St. Francis Xavier in Basilica de Bom Jesus Church is not possible. This shows how, once the user reveals more information about his goals, the recommender is able to provide a correct and convincing suggestion to the user/buyer. The example also shows how goals can be influenced (as in example 5a) to resolve a conflict if an underlying desire is known.

Extending IBR example 5b: Since the agent’s desire wsxb is now defeated, the recommender agent R generates recommendation arguments to suggest that the user agent should change its holiday destination to Kanyakumari. An argument is generated to adopt the goal hik, which is then compared with the goal of achieving hig. Since ¬sxodj is now added to the agent’s belief base, we have:

| • | Bb: {(wgv, 1, 1), (sxodj, 0, 0), (hig, 0.9, 0.9), (hik, 0.7, 0.6), (¬sxodj, 1, 0), (wgv→hik, 0.7, 0.6), (¬sxodj→¬wsxb, 1, 0), (hig→wsxb, 0.63, 0), (wgv→hig, 0, 0.9)} |

| • | B3: <{¬wsxb, ¬wsxb→¬hig}, ¬hig> |

| • | Level (B3) = 0.63*1 = 0.63 (from definition 7) |

| • | A4: B3, A3 ⇒hik (as wgv→ hig is defeated by B3) |

Therefore, A4 is a recommendation argument with Force (A4) = <Level (A4), Worth (A4)> = <0.63, 0.63>, and it defeats the argument (wgv → hig, 0, 0.9), whose certainty level is 0. That argument is defeated because Level (A4) = 0.63 > 0; hence the recommendation argument to change the holiday destination to Kanyakumari, i.e., to adopt the goal hik, is stronger than the user’s earlier goal hig (which is now uncertain due to the changes in the belief base Bb). The conflict due to the desire was thus resolved by affecting the user’s belief about wsxb, as in example 5a.

EXPERIMENTAL RESULTS

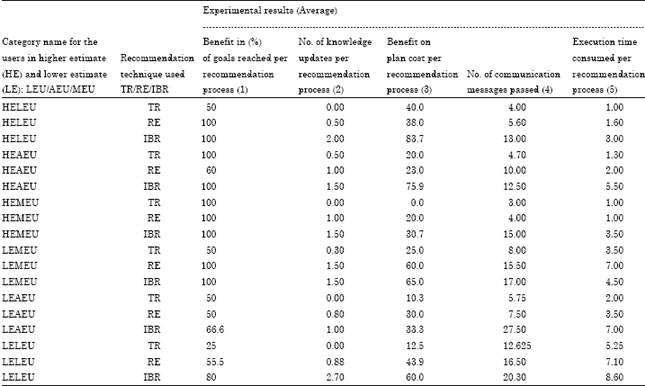

In order to evaluate and characterize the hypothetical benefits of using IBR, we conducted several random simulation runs between a user agent and an interest-based recommender agent, based on a travel recommender case study. We used three categories of users, less experienced user (LEU), average experience user (AEU) and more experienced user (MEU), and two levels of the estimates they can have about a commodity, higher estimates (HE) and lower estimates (LE). This resulted in six categories of users: HELEU, HEAEU, HEMEU, LEMEU, LEAEU and LELEU. The simulations were conducted using randomly generated input domains, and different recommendation methods were compared (Table 1). The first was traditional recommendation (TR), where recommendations are given based on user requirements without any justification; the second was recommendation with explanation (RE), where a justification is supplied. The third method was the proposed IBR, where recommendations were not only accompanied by a justification but the agents also interacted in order to discover the underlying motives and reasons behind decisions. A TR agent generated no arguments to support its recommendations. An RE agent generated only belief and explanatory arguments, whereas all types of arguments, including recommendation and instrumental arguments, were generated only by an IBR agent (refer to section 4.5 for the argumentation frameworks). A user with a higher estimate (HE) overestimates the real cost of a commodity and hence is ready to pay more than it is worth. A user with a lower estimate (LE), on the other hand, underestimates the cost and hence wants a commodity or service for less than is possible. Such users are difficult to handle using TR or even RE, because users with LE may not agree to relax their demands for quality and quantity even after explanations by a recommender. It is clear from this discussion that satisfying the users in the category LELEU was the most challenging, as they had less experience and also underestimated the products’ cost. Convincing them about a decision and resolving conflicts therefore required a more complex set of interactions using argumentation and practical reasoning. An IBR agent is equipped with such automated reasoning capabilities. Before we discuss the experimental results in detail, we give a snapshot (Fig. 3) showing the working of an IBR agent while it generates interest-based recommendations as explained above.

Fig. 3: Snapshot of the IBR agent generating interesting recommendations with explanation

Table 1: Experimental results of comparing various recommendation methods

The interaction between the two autonomous agents is shown on a Jason MAS console running the project named “ibr.mas2j”.

The difference in the estimates given by the different user categories ranges from -750 to +750. There are six categories of users, each separated by a difference interval of 250, over this range. The difference is between the user’s estimate and the actual cost of traveling and the overall budget. Taking these input parameters, the simulations were compared qualitatively and quantitatively. The main dimensions for qualitative comparison were the percentage of goals reached (success or failure in the acceptance of a recommendation) and the number of knowledge updates (new or modified information accepted during the dialogue between user and recommender agent in a recommendation process) for each recommendation method (TR, RE, IBR). The dimension for quantitative comparison was the benefit achieved on the plan’s cost (the advantage gained by the user over their estimate for a product, for both underestimation and overestimation of cost) for each recommendation method. The dimensions for measuring the system’s complexity were the number of communication messages passed and the execution time consumed per recommendation process. It was observed that uncertainty is higher amongst users with less experience, that is, towards the more negative and more positive ends of the x-axis. This was due to larger differences between the expectations of a (probably new) user and the actual cost of the commodity. As a result, the chances of successful outcomes and user satisfaction were lower whenever the recommender agent encountered a less experienced user or there were changes in the market. The performance of the three methods was quite similar when the user was ready to pay more, as in overestimation (the first three estimate categories), but problems occurred when the user wanted more for less, as in underestimation (the last three estimate categories). In such situations IBR had an advantage over the other methods. In fact, we observed that qualitative and quantitative satisfaction is higher for users of IBR. The primary qualitative interest of the agents (achieving their goals successfully) was satisfied. As far as complexity is concerned (number of messages passed and execution time), there was not much difference between TR, RE and IBR. Therefore, an IBR agent produced better results both qualitatively and quantitatively for different input domains. The reason is that it tends to probe into sub-goals if a user is not satisfied with the recommendations concerning its goals. The capability of an IBR agent to explore the underlying motives and desires of a user agent resulted in higher benefits (especially quantitatively) for both the underestimation and overestimation categories.

IBR is not an isolated effort towards flexible recommendation systems. Recent research in recommender technologies has developed an approach for integrating argumentation into recommendation systems using DeLP (Chesnevar et al., 2009). Our recommender system can be seen as a particular instance of decision making systems oriented to assisting human users in solving computer-mediated tasks with the help of software agents. Different works have combined the ideas of argumentation and decision in artificial intelligence systems (Amgoud, 2009). Our framework uses argumentation for decision making and for generating recommendations, and it distinguishes between various knowledge elements (beliefs, desires, intentions, rules, plans). It also uses argumentation to resolve conflicts due to preferences, beliefs, desires and intentions. The BDI-based IBRecommender agent used the proposed argumentation framework for practical reasoning. This works on top of a Java-based program responsible for producing recommendations using the hybrid approach. The present study contrasts with the work by Rahwan (2004), which addressed interest-based negotiation where agent preferences were not predetermined or fixed; here, we worked with recommendation scenarios in which agent preferences are not predetermined or fixed. During the present experimental study we observed higher uncertainty amongst users with less experience, due to larger differences between the expectations of a new user and the actual cost of the commodity. As a result, the chances of successful outcomes and user satisfaction were lower whenever the recommender agent encountered a less experienced user. The performance of the three methods (TR, RE and IBR) is quite similar when the user is ready to pay more, but a difference can be observed when the user wants more for less. In such situations IBR had an advantage over the other methods, and qualitative and quantitative satisfaction was higher for users of IBR.

CONCLUSION AND FUTURE WORK

In this study, we worked with recommendation scenarios in which agent preferences are not predetermined or fixed. We argued that since preferences are adopted to pursue particular goals, one agent might influence another agent's preferences by discussing the underlying motivations for adopting the associated goals. The present work concentrates on situations where the agents' limited knowledge of the domain and of each other makes it essential for them to be cooperative. We take the position that in some settings where agents have incomplete information, sharing the available information and the underlying motives may be more beneficial than hiding them. We take present-day recommendation one step further, beyond the user’s explicit preference to the reasons, motives and interests lying behind it. To this end, this paper proposed an argument-based framework for generating interest-based recommendations (IBR). The proposed framework identifies the essential features required to enable IBR using argumentation among autonomous agents. It is used to specify agents that are willing to share their experiences with others truthfully. The use of argumentation enhances multi-agent recommender systems with inference abilities to present deeper motives and reasoned suggestions. The recommender agent can reason beyond certain user preferences in order to generate an interesting recommendation for the user. The framework identifies and deduces arguments for the beliefs, desires and intentions behind the generated recommendations and user preferences. Different types of conflicts amongst the agents and ways of resolving them were also discussed.

The present IBR argumentation framework is based on certain assumptions: the agents are willing to share their experiences with others and are honest and cooperative in exchanging information with one another. As part of our future work we intend to enhance the proposed IBR framework in order to remove the above-mentioned assumptions. Lastly, our focus will be on the user evaluation and validation of the proposed argument-based framework for IBR using a case study based on a multi-agent environment.